ML Observability Model Types

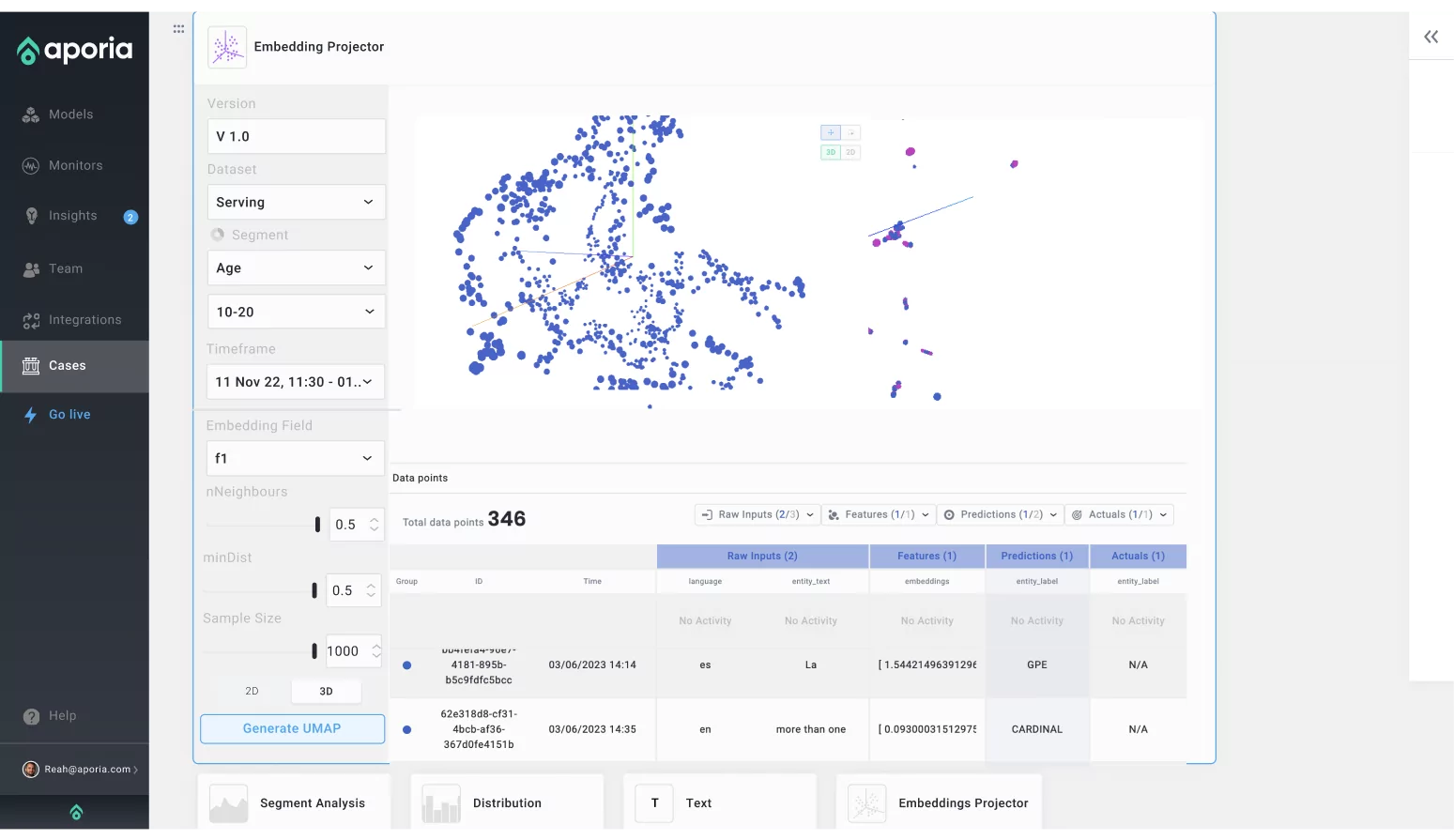

Monitor and investigate your embeddings to optimize the way your model processes language, striving for more in-depth and nuanced understanding.

The good stuff to know about Aporia’s ML Observability

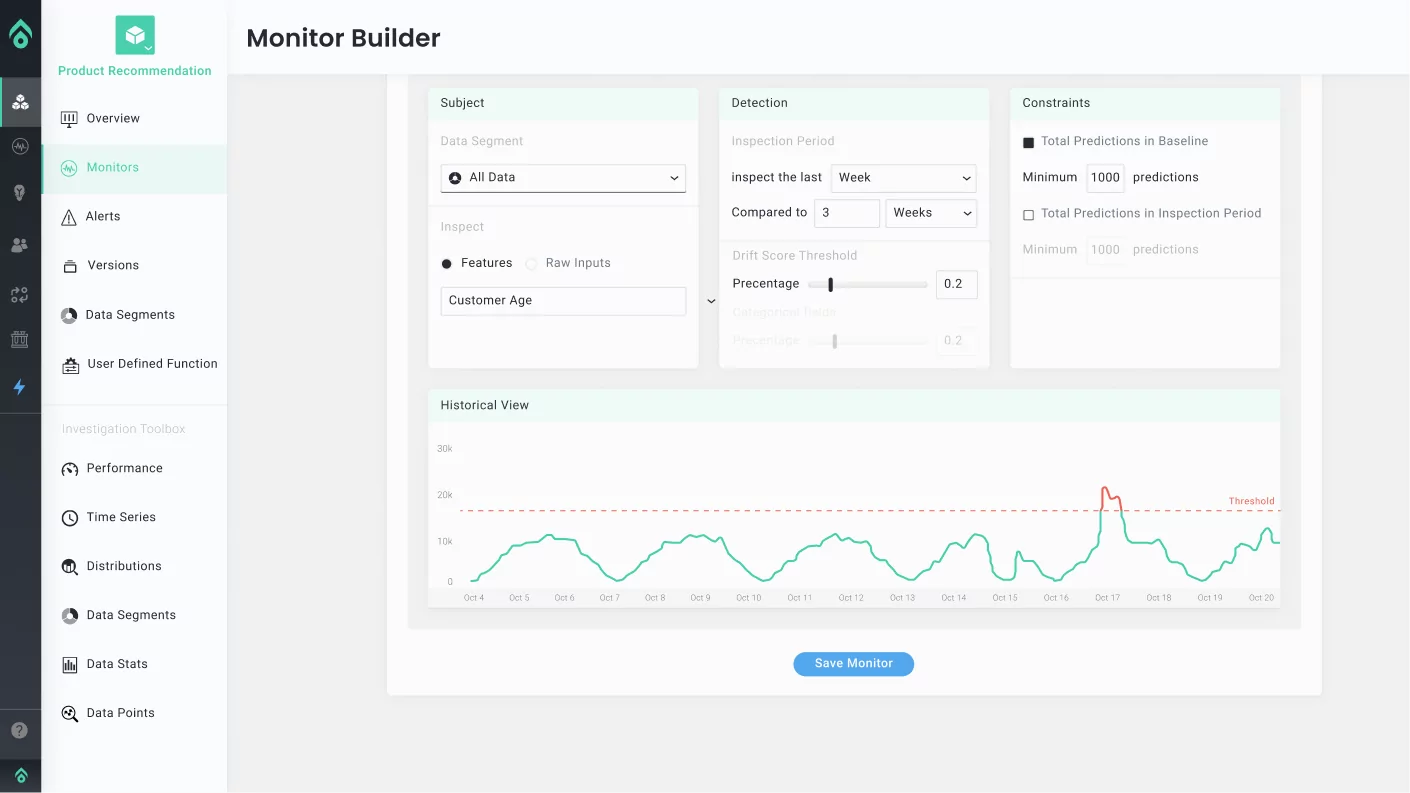

Model monitors & alerts

Instant observability

Get live alerts in Slack, MS Teams, or email when Aporia detects drift in image classifiers, object recognition biases, pixel-level data integrity issues, or faltering precision and recall in your Computer Vision models.

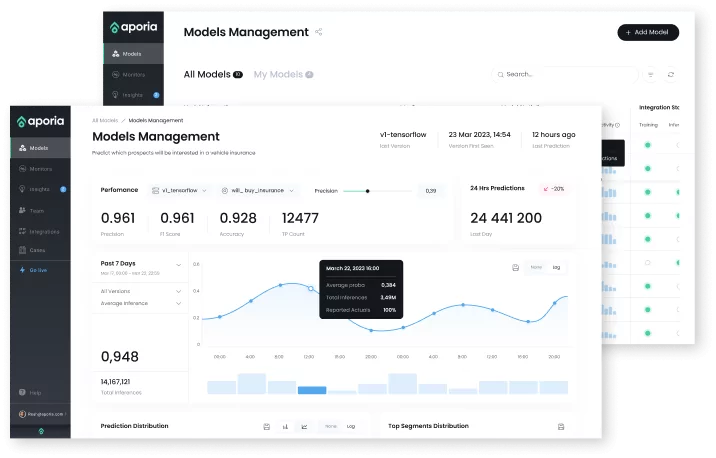

ML dashboards & visibility

Real-time view of model health

Gain a centralized view of all your vision models in a single hub. Track model activity, image classification trends, visual data behavior, and performance metrics specific to object detection and image segmentation.

Production IR & Explainability

Effortless Investigation

Seamlessly investigate root causes in a collaborative, notebook-like environment. Perform segment and drift analyses, visualize features, generate embedding projections, and decipher the cryptic layers of Computer Vision with explainability insights.

Book a demo to get started

Join the Fortune 500 companies that trust the Aporia platform for their ML models.
