How to Build an End-To-End ML Pipeline With Databricks & Aporia

This tutorial will show you how to build a robust end-to-end ML pipeline with Databricks and Aporia. Here’s what you’ll...

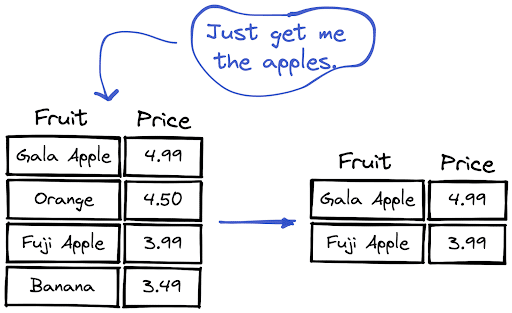

One of the commonly used methods for filtering textual data is looking for a substring. In this how-to article, we will learn how to filter string columns in Pandas and PySpark by using a substring.

In Pandas, we can use the contains method, which is available through the str accessor.

df = df[df["Fruit"].str.contains("Apple")]

Letter cases are important because “Apple” and “apple” are not the same strings. If we are not sure of the letter cases, the safe approach is to convert all the letters to uppercase or lowercase before filtering.

df = df[df["Fruit"].str.lower().str.contains("apple")]

PySpark also has a contains method that can be used as follows:

from pyspark.sql import functions as F

df = df.filter(F.col("Fruit").contains("Apple"))

Substring matching is case-sensitive in PySpark too. We can use the lower or upper function to standardize letter cases before searching for a substring.

from pyspark.sql import functions as F

df = df.filter(F.lower(F.col("Fruit")).contains("apple"))
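As a self-contained recap of the Pandas filters above, here is a minimal sketch on a small made-up DataFrame. The sample values are hypothetical; only the "Fruit" column name comes from the article. It also shows three optional parameters of str.contains that the article does not cover but that may be useful in practice: case=False for case-insensitive matching without lowercasing the column, regex=False to treat the pattern as a literal substring (by default it is interpreted as a regular expression), and na=False so that missing values count as non-matches instead of breaking the boolean mask.

```python
import pandas as pd

# Hypothetical sample data; only the "Fruit" column name comes from the article.
df = pd.DataFrame(
    {"Fruit": ["Apple", "Pineapple", "apple pie", "Banana", None, "Grape (red)"]}
)

# Case-sensitive literal match: "Pineapple" has a lowercase "a", so it is excluded.
# na=False turns the missing value into a non-match, keeping the mask boolean.
exact = df[df["Fruit"].str.contains("Apple", regex=False, na=False)]

# Case-insensitive match without converting the column to lowercase first.
any_case = df[df["Fruit"].str.contains("apple", case=False, regex=False, na=False)]

# With regex=False, "(" is matched as a literal character rather than regex syntax.
with_paren = df[df["Fruit"].str.contains("(", regex=False, na=False)]

print(exact["Fruit"].tolist())
print(any_case["Fruit"].tolist())
print(with_paren["Fruit"].tolist())
```

Whether to lowercase the column or pass case=False is largely a matter of taste. The regex=False and na=False arguments matter mainly when the search text may contain special characters or the column may contain missing values.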
