


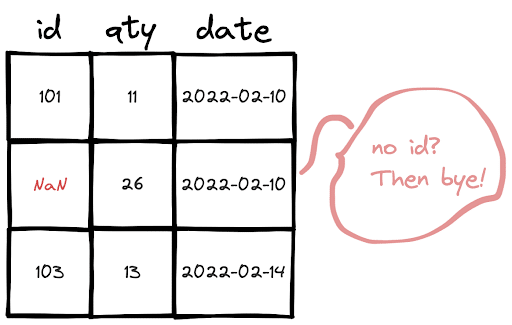

In this short how-to article, we will learn how to drop rows in Pandas and PySpark DataFrames that have a missing value in a certain column.

In Pandas, rows that have missing values can be dropped with the `dropna` function. To check only a specific column, pass its name to the `subset` parameter.

    df = df.dropna(subset=["id"])

Or, using the `inplace` parameter to modify the DataFrame directly instead of reassigning it:

    df.dropna(subset=["id"], inplace=True)

In PySpark, it is quite similar to how it is done in Pandas. The `drop` function is available through the DataFrame's `na` attribute.

    df = df.na.drop(subset=["id"])

For both PySpark and Pandas, to check multiple columns for missing values, add the additional column names to the list passed to the `subset` parameter. By default, a row is dropped if any of the listed columns has a missing value.
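As a rough illustration of how `subset` behaves in Pandas, the sketch below builds a small DataFrame and drops rows in three different ways. The sample data and the column names `score` and `name` are hypothetical and chosen only for this example. The `how` parameter is a standard `dropna` option that the text above does not cover.

```python
import numpy as np
import pandas as pd

# Hypothetical sample data; only "id" appears in the article itself.
df = pd.DataFrame(
    {
        "id": [1, np.nan, 3, 4],
        "score": [0.5, 0.7, np.nan, 0.9],
        "name": ["a", "b", "c", None],
    }
)

# Drop rows where "id" is missing; other columns are not checked.
by_id = df.dropna(subset=["id"])

# Drop rows where "id" or "score" is missing (the default, how="any").
by_any = df.dropna(subset=["id", "score"])

# Drop rows only when both "id" and "score" are missing.
by_all = df.dropna(subset=["id", "score"], how="all")

print(len(by_id), len(by_any), len(by_all))
```

Note that the missing value in `name` never causes a row to be dropped here, because `name` is not listed in `subset`.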
